Ep #28: Mastering Memcached Part 2: How It Really Works at Scale

Going beyond the basics: internal design choices that make Memcached fast

Ep #27: Breaking the complex System Design Components

By Amit Raghuvanshi | The Architect’s Notebook

🗓️ Aug 21, 2025 · Premium Post (#8)

Recap of Part 1

In the first part of this series, we explored the basics of caching and the challenges with naive in-memory approaches. We saw how Memcached solves these problems with a centralized, distributed cache, eliminating duplication, improving consistency, and boosting scalability. We also introduced Memcached itself, discussed why it’s widely used, highlighted its key features, and looked at the core components of its architecture. With that foundation in place, it’s time to go deeper and examine how Memcached actually works under the hood.

1. Memory Management Architecture

Memcached’s memory management is designed for efficiency and speed, using a slab allocation system and LRU (Least Recently Used) eviction to optimize memory usage.

1.1 Slab Allocation System

Memcached avoids traditional memory allocation (e.g., calling malloc for every item) to prevent fragmentation and improve performance. Instead, it uses a slab-based approach:

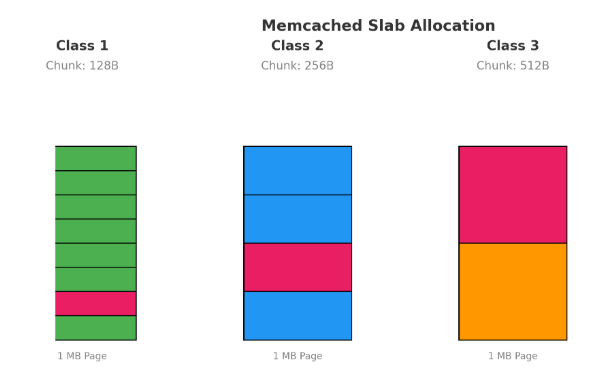

Slab Classes:

Memory is divided into slab classes, each responsible for storing items within a specific size range (e.g., up to 128 bytes, 129-160 bytes, etc.). The chunk size grows geometrically from one class to the next by a configurable growth factor (1.25 by default) to accommodate various item sizes.

Page Allocation:

Each slab class is allocated memory in pages, which are fixed-size blocks (default: 1MB). Pages are the unit of memory allocation from the operating system to Memcached.

Chunk Management:

Each page within a slab class is subdivided into chunks of a fixed size, determined by the slab class. For example, a slab class for items up to 128 bytes uses 128-byte chunks, so a 1MB page holds 8,192 of them. Each chunk stores exactly one item, and because every chunk in a class is the same size, a freed chunk can be reused by any later item in that class without fragmenting the page.

Efficient Allocation:

By pre-allocating memory in slabs and dividing it into fixed-size chunks, Memcached avoids the overhead of dynamic memory allocation and reduces external fragmentation. When an item is stored, Memcached selects the smallest slab class that can accommodate it, ensuring efficient memory use.

Trade-Offs:

Internal Fragmentation: If an item is smaller than the chunk size, the remaining space is wasted (e.g., a 100-byte item in a 128-byte chunk wastes 28 bytes). This is a trade-off for avoiding external fragmentation.

Slab Rebalancing: Older versions of Memcached do not reassign pages between slab classes once they have been allocated, so if one slab class fills up while others have free space, memory utilization may be suboptimal. The slab_automove feature in newer versions can mitigate this by moving pages between classes.
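To make the slab mechanics concrete, here is a minimal Python sketch of how chunk sizes could be derived from a growth factor and how an item is matched to the smallest class that fits. The function names, the 128-byte starting size, and the 8-byte alignment are assumptions chosen for illustration; real Memcached derives its smallest chunk from its item header size and counts the header and key toward the item size.

```python
import bisect

PAGE_SIZE = 1024 * 1024  # default page size described above (1MB)


def slab_chunk_sizes(smallest=128, factor=1.25, page_size=PAGE_SIZE, align=8):
    """Return one chunk size per slab class, growing geometrically.

    `smallest` and `align` are illustrative assumptions, not Memcached's
    exact values.
    """
    sizes = []
    size = float(smallest)
    while size <= page_size / factor:
        aligned = -(-int(size) // align) * align  # round up to the alignment
        sizes.append(aligned)
        size = aligned * factor
    sizes.append(page_size)  # largest class: one chunk fills a whole page
    return sizes


def pick_class(sizes, item_size):
    """Return (class index, chunk size) of the smallest class that fits."""
    idx = bisect.bisect_left(sizes, item_size)
    if idx == len(sizes):
        raise ValueError("item is larger than the largest chunk")
    return idx, sizes[idx]


def internal_waste(sizes, item_size):
    """Bytes left unused inside the chunk chosen for this item."""
    _, chunk = pick_class(sizes, item_size)
    return chunk - item_size


def chunks_per_page(chunk_size, page_size=PAGE_SIZE):
    """How many fixed-size chunks one page is carved into."""
    return page_size // chunk_size
```

With these illustrative defaults, a 100-byte item lands in the 128-byte class and wastes 28 bytes, matching the internal-fragmentation example above, while a 129-byte item moves up to the next class.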

1.2 LRU (Least Recently Used) Management

Memcached uses an LRU eviction policy to manage memory when a slab class runs out of space:

Access Tracking:

Each item in a slab class is tracked in an LRU chain (a doubly linked list). When an item is accessed (via get or set), it is moved to the head of the LRU chain, marking it as recently used.

Eviction Policy:

When a slab class is full and a new item needs to be stored, Memcached evicts the item at the tail of the LRU chain (the least recently used item). This ensures that frequently accessed items remain in the cache while infrequently used ones are removed.

Per-Slab LRU:

Each slab class maintains its own LRU chain, ensuring that small items (e.g., 100 bytes) don’t unfairly evict large items (e.g., 1KB) and vice versa. This isolation improves fairness and prevents one slab class from starving others.

Expiration Handling:

Memcached also supports time-based expiration (via the exptime parameter in set commands). Expired items are lazily evicted during access or maintenance operations, reducing overhead. If an expired item is accessed, Memcached returns a cache miss.
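The behaviour described above can be modelled with a small Python sketch of a single slab class. SlabClassLRU and its methods are hypothetical names, the chunk count stands in for the class's memory, and exptime is treated as a relative number of seconds. Real Memcached's LRU is written in C and is more elaborate, so treat this as a model of the idea rather than its implementation.

```python
import time
from collections import OrderedDict


class SlabClassLRU:
    """Toy model of one slab class with its own LRU chain (hypothetical)."""

    def __init__(self, max_chunks, clock=time.monotonic):
        self.max_chunks = max_chunks
        self.clock = clock  # injectable so expiry can be tested
        # key -> (value, expires_at); first entry is the LRU tail,
        # last entry is the most recently used item.
        self.items = OrderedDict()

    def set(self, key, value, exptime=0):
        expires_at = self.clock() + exptime if exptime > 0 else None
        if key in self.items:
            del self.items[key]  # overwrite reuses the item's slot
        elif len(self.items) >= self.max_chunks:
            self.items.popitem(last=False)  # class is full: evict the LRU tail
        self.items[key] = (value, expires_at)  # newest item becomes the head

    def get(self, key):
        entry = self.items.get(key)
        if entry is None:
            return None  # cache miss
        value, expires_at = entry
        if expires_at is not None and self.clock() >= expires_at:
            del self.items[key]  # lazy expiration: removed only when touched
            return None  # an expired item looks like a miss
        self.items.move_to_end(key)  # mark as recently used
        return value
```

Because each instance holds its own chain, a burst of writes to one class can only evict items from that same class.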

2. Network Architecture

Memcached’s network architecture is optimized for low latency and high concurrency, leveraging event-driven I/O and a simple protocol.

2.1 Connection Handling

Event-Driven I/O:

Memcached uses the libevent library to handle network operations in an event-driven, non-blocking manner. Libevent monitors sockets for events (e.g., new connections, incoming data) and processes them efficiently, allowing a single thread to handle thousands of concurrent connections.

Connection Pooling:

Client libraries maintain pools of persistent connections to Memcached servers to minimize the overhead of establishing new connections. The pool reuses existing connections for multiple requests, reducing latency and resource usage.

Non-Blocking Operations:

All network operations are non-blocking, meaning the server can handle multiple requests concurrently without waiting for one to complete. This is critical for supporting high-throughput environments, such as web applications with millions of users.
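As a rough analogue of this event-driven model, the sketch below uses Python's standard selectors module in place of libevent. The callbacks (on_accept, on_readable), the run_once helper, and the echo behaviour are illustrative assumptions, not Memcached's code; the point is that one thread waits on many sockets and only touches the ones that are ready.

```python
import selectors
import socket


def make_listener(host="127.0.0.1", port=0):
    """Create a non-blocking listening socket (port 0 picks a free port)."""
    srv = socket.socket()
    srv.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    srv.bind((host, port))
    srv.listen()
    srv.setblocking(False)
    return srv


def on_accept(sel, srv):
    """Listener is readable: accept one connection and watch it for data."""
    conn, _addr = srv.accept()
    conn.setblocking(False)
    sel.register(conn, selectors.EVENT_READ, on_readable)


def on_readable(sel, conn):
    """Connection is readable: echo the request, or clean up on EOF."""
    data = conn.recv(4096)
    if data:
        # A real server would queue unsent bytes and wait for EVENT_WRITE;
        # a single send is enough for this small sketch.
        conn.send(b"ECHO " + data)
    else:
        sel.unregister(conn)
        conn.close()


def run_once(sel, timeout=1.0):
    """Wait for ready sockets and dispatch each to its registered callback."""
    events = sel.select(timeout)
    for key, _mask in events:
        key.data(sel, key.fileobj)
    return len(events)


def serve_forever(host="127.0.0.1", port=0):
    """One thread, one loop, many connections."""
    sel = selectors.DefaultSelector()
    sel.register(make_listener(host, port), selectors.EVENT_READ, on_accept)
    while True:
        run_once(sel)
```

No call in the loop blocks on a single client: select returns only the sockets that have work, so a slow connection does not hold up the others.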

2.2 Protocol Details

Memcached uses a simple, text-based ASCII protocol (with an optional binary protocol for efficiency) for client-server communication. The protocol is stateless and designed for low overhead. Below are the key commands:
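As a small illustration of how lightweight the text protocol's framing is, the sketch below builds set and get requests and parses a single-key get reply. The helper names are hypothetical, and only these two storage and retrieval commands are shown.

```python
def build_set(key, value, flags=0, exptime=0):
    """Frame a text-protocol set request.

    Layout: "set <key> <flags> <exptime> <bytes>\r\n" followed by the
    data block and a trailing "\r\n". `value` must be bytes.
    """
    header = f"set {key} {flags} {exptime} {len(value)}\r\n".encode("ascii")
    return header + value + b"\r\n"


def build_get(key):
    """Frame a text-protocol get request for a single key."""
    return f"get {key}\r\n".encode("ascii")


def parse_get_response(raw):
    """Parse a complete single-key get reply.

    Returns the value bytes on a hit, or None on a miss (a bare "END").
    """
    if raw == b"END\r\n":
        return None
    header, _, rest = raw.partition(b"\r\n")
    parts = header.split()
    # Expected header: VALUE <key> <flags> <bytes>
    if len(parts) < 4 or parts[0] != b"VALUE":
        raise ValueError("unexpected reply header")
    nbytes = int(parts[3])
    if rest[nbytes:] != b"\r\nEND\r\n":
        raise ValueError("malformed or incomplete reply")
    return rest[:nbytes]
```

The byte count in the header is what lets the server and client read the data block without scanning it, which also allows values to contain arbitrary bytes.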

🔒 This is a premium post: an extended deep-dive continuation of Part 1
