System Design Insight: Why “Just Add a Queue” Is Not Always the Right Answer

The hidden costs of asynchronous architecture

One concept, clarified in 2 minutes

By Amit Raghuvanshi | The Architect’s Notebook

🗓️ Mar 14, 2026 · Free Edition

The Knee-Jerk Reaction

In almost every System Design interview, when I ask a candidate, “The database is slowing down under write load, what do we do?” the answer is immediate.

“Put a queue in front of it.”

It is the standard engineering reflex. Decouple the producer from the consumer. Smooth out the spikes. It sounds perfect.

But in production, queues are not a magic wand. They are debt.

When you introduce a queue (like Kafka, RabbitMQ, or SQS), you aren’t just adding a buffer. You are transforming a simple synchronous problem into a complex asynchronous distributed system.

Here is why you should think twice before you “just add a queue.”

1. The Complexity Tax (It’s never “Just” a Queue)

In a synchronous system (say, a REST call over HTTP), if the request fails, the user knows immediately. They see a 500 error. They retry. Simple.

In a queued system, “Success” (202 Accepted) is a lie. It only means “We stored your request and will get to it later.” It does not mean we processed it, and the user will not see an error if processing fails afterwards.

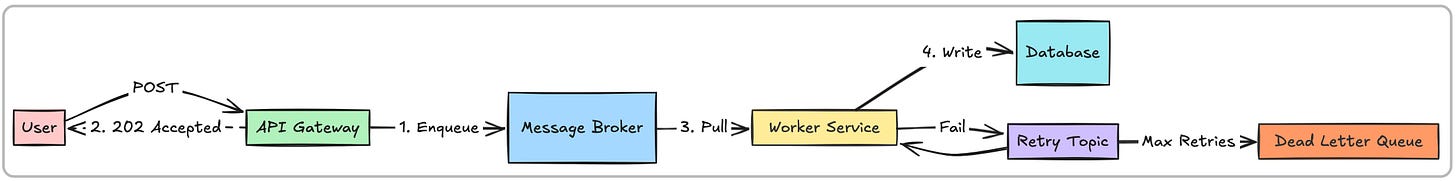

To make this production-ready, you don’t just need a queue. You now need:

A Retry Policy: What if the consumer fails?

A Dead Letter Queue (DLQ): What if it fails 5 times? Where does the message go?

A Replay Mechanism: How do you re-process the DLQ messages after you fix the bug?

Idempotency: What if the message is delivered twice?

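Suddenly, your architecture is no longer one queue. It is a main queue, a retry path, a DLQ, and a replay tool, each with its own failure modes.

You have roughly tripled your operational surface area. You now have to monitor queue depth, consumer lag, and DLQ alerts.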
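The four pieces listed above can be sketched in a few dozen lines. This is a minimal in-memory illustration, not how Kafka, RabbitMQ, or SQS implement them: the `Worker` class, its method names, and the in-process `deque` queues are all hypothetical, and a real system would keep the idempotency keys in durable storage and add backoff between retries.

```python
from collections import deque

MAX_ATTEMPTS = 5  # mirrors the "fails 5 times" example above


class Worker:
    """Toy consumer showing retry, DLQ, replay, and idempotency together."""

    def __init__(self, handler):
        self.handler = handler
        self.main = deque()     # main queue
        self.dlq = deque()      # dead letter queue
        self.processed = set()  # idempotency keys already handled (assumed in-memory)

    def enqueue(self, msg_id, payload):
        self.main.append({"id": msg_id, "payload": payload, "attempts": 0})

    def drain(self):
        while self.main:
            msg = self.main.popleft()
            if msg["id"] in self.processed:
                continue  # idempotency: a duplicate delivery is skipped
            try:
                self.handler(msg["payload"])
                self.processed.add(msg["id"])
            except Exception:
                msg["attempts"] += 1
                if msg["attempts"] >= MAX_ATTEMPTS:
                    self.dlq.append(msg)   # give up and park the message
                else:
                    self.main.append(msg)  # retry policy: requeue, no backoff here

    def replay_dlq(self):
        """Move parked messages back to the main queue after a fix is deployed."""
        while self.dlq:
            msg = self.dlq.popleft()
            msg["attempts"] = 0
            self.main.append(msg)
        self.drain()
```

Even in this toy version, every branch is something you have to test, monitor, and explain during an incident.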

2. The “Stale Data” Trap

Queues trade latency for durability: you accept a delay in exchange for not losing the request. But sometimes latency is itself the correctness constraint.

Imagine a ride-sharing app.

User requests a ride.

You put the request in a Queue.

The system is under heavy load; the queue lag is 5 minutes.

User gets tired of waiting and closes the app.

5 minutes later: Your worker processes the message and dispatches a driver.

The driver arrives at an empty street. You just wasted money and frustrated a driver because you processed a request that was no longer valid.

The Lesson: If the value of the request decays rapidly over time (like real-time bidding or ride-hailing), a queue might be the wrong choice. Fast failure (dropping the request) is often better than slow success.
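If you do keep a queue in front of time-sensitive work, one common mitigation is to stamp each message with a deadline and have the worker drop anything that has expired. The sketch below assumes a hypothetical `RideRequest` message and a `dispatch` callback; the 30-second TTL in the usage example is an illustrative number, not a recommendation.

```python
import time
from dataclasses import dataclass


@dataclass
class RideRequest:
    rider_id: str
    created_at: float   # seconds since epoch, set by the producer
    ttl_seconds: float  # how long the request stays worth processing


def is_expired(req, now):
    return now - req.created_at > req.ttl_seconds


def handle(req, dispatch, now=None):
    """Drop stale requests instead of acting on them late."""
    now = time.time() if now is None else now
    if is_expired(req, now):
        return "dropped"  # fast failure: nobody is waiting for this ride anymore
    dispatch(req.rider_id)
    return "dispatched"


# Usage: a request with a 30-second TTL, seen 10 seconds and then 5 minutes later.
sent = []
req = RideRequest("rider-1", created_at=1000.0, ttl_seconds=30.0)
first = handle(req, sent.append, now=1010.0)   # still fresh
second = handle(req, sent.append, now=1300.0)  # queue lag exceeded the TTL
```

The check is cheap, but it only limits the damage. The rider still waited, so the producer side needs its own way to tell the user the request did not go through.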

3. The Debugging Nightmare

Debugging a synchronous monolith is easy: follow the stack trace.

Debugging a distributed queue is a detective story.

Customer Support: “My order is stuck in ‘Pending’.”

You: “Okay, let me check the API logs... okay, it was enqueued. Now let me check the Worker logs... nothing. Is it stuck in the queue? Is the partition blocked? Did it go to the DLQ?”

You lose end-to-end traceability of the transaction unless you propagate trace context across every boundary (API, broker, worker) with distributed tracing such as OpenTelemetry.
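The core idea behind tracing across a queue is small: attach an identifier when the message is enqueued and log it at every hop. The sketch below is a plain-logging stand-in for that idea, not the OpenTelemetry API; the function names, the `correlation_id` field, and the list used as a queue are all assumptions made for illustration.

```python
import logging
import uuid

log = logging.getLogger("orders")


def enqueue_order(queue, order):
    """API side: tag the message so it can be followed through later hops."""
    correlation_id = str(uuid.uuid4())
    queue.append({"correlation_id": correlation_id, "order": order})
    log.info("enqueued correlation_id=%s", correlation_id)
    return correlation_id


def process_next(queue):
    """Worker side: log the same identifier before doing any work."""
    message = queue.pop(0)
    log.info("processing correlation_id=%s", message["correlation_id"])
    return message["correlation_id"]
```

With the same ID in the API log, the worker log, and any DLQ record, “where is my order?” becomes a single search instead of three separate investigations.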

4. The “Death Spiral”

Queues protect your database from spikes, but they don’t protect themselves from sustained overload.

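If your consumers can handle 100 messages/sec, but your producers are pushing 110 messages/sec, your queue grows without bound: the backlog gains 10 messages every second, or 36,000 every hour, for as long as the overload lasts. Eventually: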

You run out of memory/disk on the broker.

Latency increases to the point where data is useless.

You have to flush (delete) the queue to recover, losing data.
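The arithmetic is worth doing before the incident rather than during it. The helpers below are a back-of-the-envelope sketch using the 100 and 110 messages/sec figures from above; the function names and the one-million-message broker capacity in the usage example are hypothetical.

```python
def backlog_after(seconds, produce_rate, consume_rate, initial=0):
    """Messages waiting after `seconds` of steady load (never below zero)."""
    return max(initial + (produce_rate - consume_rate) * seconds, 0)


def seconds_until_full(capacity, produce_rate, consume_rate, initial=0):
    """Time until the backlog reaches `capacity`, or None if it never does."""
    growth = produce_rate - consume_rate
    if growth <= 0:
        return None  # consumers keep up, so the queue does not fill
    return max(capacity - initial, 0) / growth


# Usage with the numbers from the text and an assumed capacity of 1,000,000 messages.
one_hour = backlog_after(3600, produce_rate=110, consume_rate=100)
time_left = seconds_until_full(1_000_000, produce_rate=110, consume_rate=100)
```

Here `one_hour` is 36,000 messages and `time_left` is 100,000 seconds, a little under 28 hours. A 10% overload looks harmless on a dashboard, yet it puts a hard deadline on the broker.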

Summary: When to Queue?

Don’t add a queue just to feel “scalable.”

Use a Queue when: You need to bridge two systems with significantly different throughput capabilities (e.g., fast ingestion, slow PDF generation) AND the task is not time-sensitive.

Avoid a Queue when: The user needs an immediate answer, or the data becomes worthless after a few seconds.

Sometimes, the best architecture is just a Load Balancer and the courage to return a 429 Too Many Requests.
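A minimal sketch of that courage: cap the number of requests in flight and reject the rest immediately. The `AdmissionGate` class and its `(status, body)` return shape are hypothetical and not tied to any web framework; a real service would usually do this in the load balancer or as middleware.

```python
import threading


class AdmissionGate:
    """Reject work immediately once `max_in_flight` requests are running."""

    def __init__(self, max_in_flight):
        self._slots = threading.BoundedSemaphore(max_in_flight)

    def handle(self, work):
        # Non-blocking acquire: if no slot is free, fail fast instead of queueing.
        if not self._slots.acquire(blocking=False):
            return 429, "Too Many Requests"
        try:
            return 200, work()
        finally:
            self._slots.release()
```

The caller gets an honest answer in milliseconds and can back off or retry, which is the opposite of a 202 that quietly turns into a five-minute wait.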

Until next time, The Architect’s Notebook
