Ep #82: The Distributed Lock Nightmare (Part 2): The Only Way to Fix Race Conditions

Moving beyond Redis: How to use ZooKeeper, Etcd, and Fencing Tokens to guarantee data safety.

Breaking the complex System Design Components

By Amit Raghuvanshi | The Architect’s Notebook

🗓️ Feb 12, 2026 · Deep Dive

The Sword of Truth (Fencing Tokens)

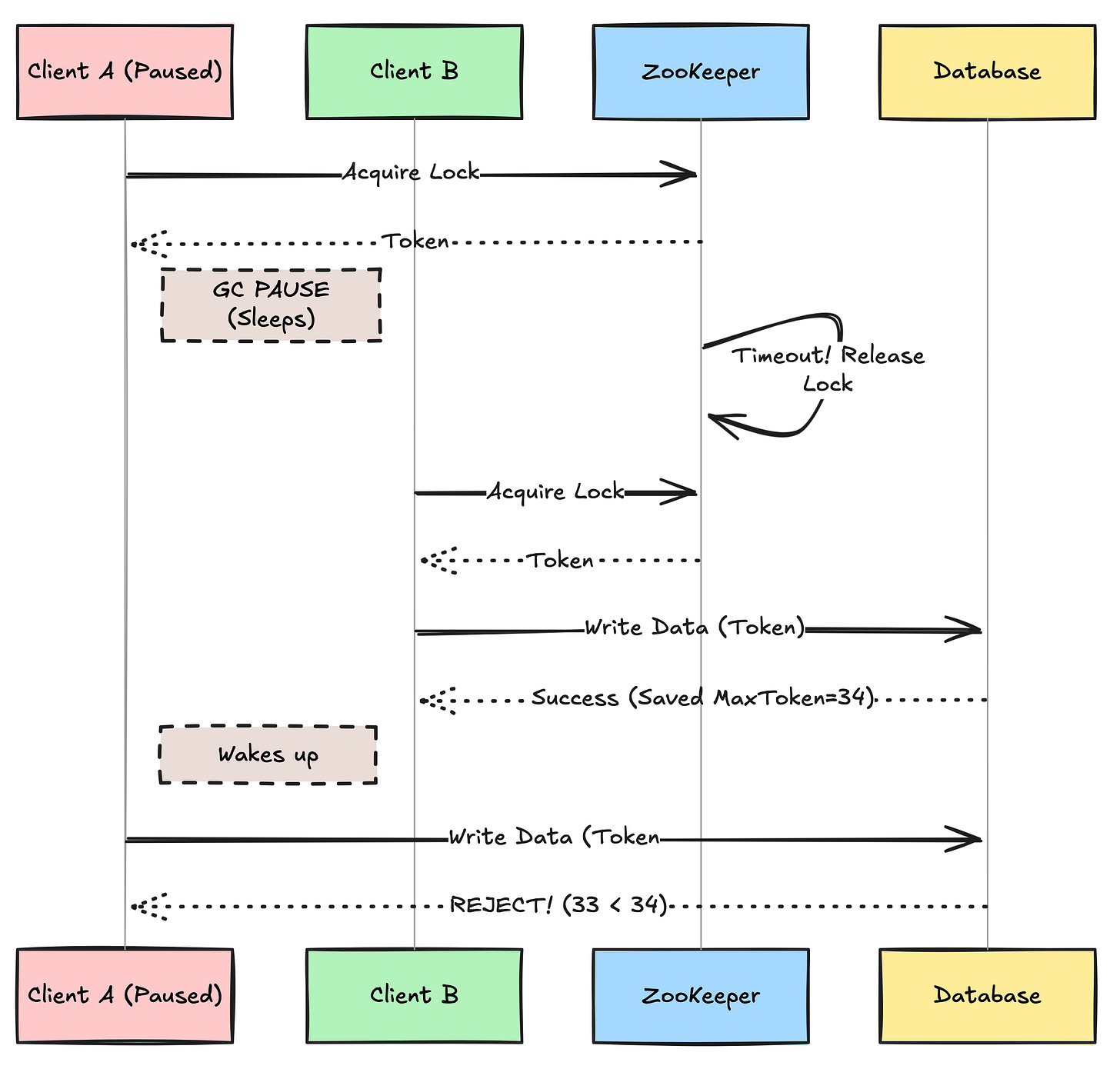

In Part 1, we learned that even with ZooKeeper or Etcd, a “GC Pause” can cause a client to lose its lock without knowing it. Client A acquires the lock. Client A stalls (GC pause). ZooKeeper expires the lock. Client B takes the lock. Client A wakes up, still believing it holds the lock, and overwrites Client B’s work.

This is a fundamental problem: Clients cannot trust themselves. A client can never know if it still holds the lock at the exact moment it writes to the database.

The Solution: The Fencing Token

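To solve this, we need the resource itself (the Database) to act as the final judge. When you acquire a lock in ZK/Etcd, the server gives you a monotonically increasing number (an Epoch or Revision ID, for example a ZooKeeper zxid or an etcd revision). Every write carries that number, and the database refuses any write whose number is lower than the highest one it has already seen.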

The Protocol:

Client A gets Lock #33.

Client A falls asleep. The lock server expires Lock #33.

Client B gets Lock #34.

Client B writes to the Database with Token #34.

Client A wakes up. It tries to write to the Database with Token #33.

The Database Rejects the Write: “Sorry, I have already processed Token #34. Token #33 is too old.”
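The six steps above can be sketched in a few lines of Python. This is a minimal in-memory model, not a real client: `LockService` and `FencedStore` are hypothetical stand-ins for ZooKeeper/Etcd and the database, and the starting token of 33 is chosen only to match the walkthrough.

```python
import itertools
import threading


class LockService:
    """Hypothetical stand-in for ZK/Etcd: hands out strictly increasing tokens."""

    def __init__(self, start=33):
        self._counter = itertools.count(start)
        self._mutex = threading.Lock()

    def acquire(self):
        # A real lock server also tracks ownership and expiry;
        # this sketch models only the monotonically increasing token.
        with self._mutex:
            return next(self._counter)


class StaleTokenError(Exception):
    """Raised when a write carries a token older than one already seen."""


class FencedStore:
    """Hypothetical stand-in for the protected resource (the database)."""

    def __init__(self):
        self.value = None
        self.highest_token = -1
        self._mutex = threading.Lock()

    def write(self, token, value):
        with self._mutex:
            if token < self.highest_token:
                raise StaleTokenError(
                    f"token {token} is older than {self.highest_token}"
                )
            self.highest_token = token
            self.value = value


locks = LockService()
store = FencedStore()

token_a = locks.acquire()  # Client A gets the lock, then stalls in a GC pause
token_b = locks.acquire()  # the lock expires and Client B gets the next token
store.write(token_b, "written by B")

try:
    store.write(token_a, "written by A")  # Client A wakes up with a stale token
    outcome = "accepted"
except StaleTokenError:
    outcome = "rejected"
```

Note that the check lives inside `FencedStore.write`, not in the client. Client A never has to notice that its lock expired; the store refuses the write on its own.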

This is the key takeaway: A Distributed Lock is useless unless the resource you are protecting checks the lock token.

You cannot trust the client to check the lock. You must force the resource (Database) to validate the token.
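One way to make a SQL database validate the token is to store the last accepted token next to the row and put the comparison in the `WHERE` clause of the write. The sketch below uses Python's built-in `sqlite3` module purely so it runs anywhere; the `account` table, its `last_token` column, and the `fenced_update` helper are assumptions made for illustration, not part of any library.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE account ("
    "id INTEGER PRIMARY KEY, balance INTEGER, last_token INTEGER)"
)
conn.execute("INSERT INTO account VALUES (1, 100, 0)")


def fenced_update(conn, account_id, new_balance, token):
    """Apply the write only if no newer token has been recorded.

    Returns True when the row was updated, False when the token was stale.
    """
    cur = conn.execute(
        "UPDATE account SET balance = ?, last_token = ? "
        "WHERE id = ? AND last_token <= ?",
        (new_balance, token, account_id, token),
    )
    conn.commit()
    # rowcount is 0 when the WHERE clause filtered the row out.
    return cur.rowcount == 1


b_ok = fenced_update(conn, 1, 250, token=34)  # Client B, current lock holder
a_ok = fenced_update(conn, 1, 999, token=33)  # Client A, stale token
balance = conn.execute(
    "SELECT balance FROM account WHERE id = 1"
).fetchone()[0]
```

Because the comparison and the write happen in a single `UPDATE` statement, there is no gap between "check the token" and "write the data" for a paused client to fall into.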

Part 1: The Underdog: Database Advisory Locks

Before you spin up a ZooKeeper cluster (which is operational hell), look at your database. Postgres has a feature called Advisory Locks. These are locks that don’t lock data rows; they lock abstract “numbers.”

-- SQL
-- Try to acquire lock #12345
SELECT pg_try_advisory_lock(12345);

Pros:

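Transactional (with the xact variants): pg_try_advisory_xact_lock ties the lock to your DB transaction. If the transaction commits or rolls back, the lock releases automatically. The session-level pg_try_advisory_lock shown above behaves differently: it is held until you call pg_advisory_unlock or the connection closes.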

Zero Ops: No new infrastructure to manage.

Perfect for DB tasks: If you are locking to prevent a DB race condition, using the DB itself for locking avoids the extra network round trip to a separate lock service.

Cons:

Connection Pooling: A session-level lock pins its DB connection for as long as it is held. If 10,000 workers hold locks at the same time, you need 10,000 connections (unless you use transaction-level locks and keep those transactions short).
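Since advisory locks key on numbers rather than names, application code usually needs a stable way to turn a resource name into a `bigint`. The helper below is one possible sketch, assuming SHA-256 truncated to a signed 64-bit integer; the function names and the `%s` placeholder style (as used by psycopg-style drivers) are illustrative assumptions. It builds the query for the transaction-level variant, `pg_try_advisory_xact_lock`, and does not open a database connection itself.

```python
import hashlib
import struct


def advisory_key(name: str) -> int:
    """Map a resource name to a signed 64-bit key (Postgres bigint range)."""
    digest = hashlib.sha256(name.encode("utf-8")).digest()
    # ">q" unpacks the first 8 bytes as a big-endian signed 64-bit integer.
    (key,) = struct.unpack(">q", digest[:8])
    return key


def try_xact_lock_query(name: str):
    """Return (sql, params) for a transaction-level advisory lock attempt."""
    return "SELECT pg_try_advisory_xact_lock(%s)", (advisory_key(name),)


sql, params = try_xact_lock_query("invoice:42")
```

Inside an open transaction you would pass `sql` and `params` to your driver's `execute` call and proceed only if the query returns true. Two different names can in principle hash to the same key, so treat a collision as harmless extra contention rather than a correctness bug.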


How do we implement Fencing Tokens in C# or Java? How do we handle network partitions where the lock server splits in half?

In the rest of this deep dive, we will cover:

Code Implementation: A C# example of a `FencedOperation` that validates tokens against the database.

Postgres Advisory Lock Pattern: A safer, infrastructure-free way to handle locking for startups.

The Decision Framework: A flowchart to help you choose between Redis, ZooKeeper, and Postgres.

Testing: How to write a Chaos Engineering test to prove your lock actually works.