Ep #108: Change Data Capture (Part 2): The Transactional Outbox & C# Implementation

How to write bulletproof Kafka consumers for CDC, eliminate distributed transactions, and handle the operational gotchas of log-tailing.

By Amit Raghuvanshi | The Architect’s Notebook

🗓️ May 14, 2026 · Deep Dive

The Distributed Transaction Nightmare

In Part 1, we learned how Change Data Capture (CDC) reliably streams database changes to Kafka by reading the Write-Ahead Log (WAL). This solves the problem of keeping a search index in sync with a primary database. But CDC solves an even bigger problem in microservices: The Distributed Transaction.

The Microservice Dilemma

Imagine you are building an E-Commerce system. You have an OrderService and an InventoryService. When a user places an order, you need to do two things:

1. Save the Order to the OrderService database.

2. Publish an OrderCreated event to Kafka so the InventoryService can reserve the items.

The Bad Code:

// 1. Save to database

await _dbContext.Orders.AddAsync(newOrder);

await _dbContext.SaveChangesAsync();

// 2. Publish to Kafka

await _kafkaProducer.ProduceAsync("orders-topic", newOrderEvent);

Just like the Cache/Search problem in Part 1, this is a Dual Write. If the database saves, but Kafka is temporarily down (or the network drops), you have an Order in the database, but the Inventory service never hears about it. The customer pays, but the items are never reserved. To fix this natively, you would need a “Two-Phase Commit” (2PC) distributed transaction. Distributed transactions are slow, block resources, and are notoriously prone to failure.
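The failure mode can be reproduced without any infrastructure. Below is a minimal sketch, assuming a plain list as the orders table and a hypothetical `FakeBroker` that can be switched "down"; neither type is part of EF Core or the Kafka client.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical stand-in for a Kafka producer that can be made unavailable.
public sealed class FakeBroker
{
    public bool IsDown { get; set; }
    public List<string> Published { get; } = new();

    public void Produce(string message)
    {
        if (IsDown) throw new InvalidOperationException("broker unavailable");
        Published.Add(message);
    }
}

public static class DualWriteDemo
{
    // Mirrors the two-step code above: commit locally, then publish.
    // Returns true only if both steps succeeded.
    public static bool PlaceOrder(List<string> ordersTable, FakeBroker broker, string orderId)
    {
        ordersTable.Add(orderId); // step 1: the "database" commit succeeds

        try
        {
            broker.Produce($"OrderCreated:{orderId}"); // step 2: can fail on its own
            return true;
        }
        catch (InvalidOperationException)
        {
            // The order is already committed, so there is nothing left to roll back.
            return false;
        }
    }
}
```

Calling `DualWriteDemo.PlaceOrder(orders, new FakeBroker { IsDown = true }, "order-1")` returns false, yet `orders` still holds one entry while the broker holds none. That is exactly the inconsistent state described above.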

The Solution: The Transactional Outbox Pattern

What if we could guarantee that the Kafka message is sent only if the database transaction commits, without using 2PC?

We can, by using CDC. One of its most powerful uses is implementing the Transactional Outbox Pattern. Instead of calling another service over REST (synchronous, brittle) or publishing directly to Kafka (dual-write, unsafe), the service writes the event to an Outbox Table inside the same database, inside the same SQL transaction as its business data. CDC then picks up that row and publishes it to Kafka.

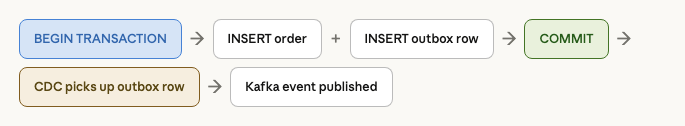

BEGIN TRANSACTION

INSERT INTO orders ...

INSERT INTO outbox_events (payload) VALUES ('{"event": "OrderCreated"}')

COMMIT

Because both tables live in the same Postgres database, this is a standard, lightning-fast, local ACID transaction. If it rolls back, the outbox message disappears. If it commits, the outbox message is durably saved. Then, Debezium (our CDC tool) reads the WAL, sees the insert into the outbox_events table, and publishes that exact payload to Kafka.

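You have just achieved guaranteed message publishing without a distributed transaction. Strictly speaking, the guarantee is at-least-once rather than exactly-once: a CDC connector can re-publish an event after a restart, so consumers should be idempotent.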

The guarantee: the message is only published if the business transaction committed. If the transaction rolls back, the outbox row never exists, so the message is never sent. This eliminates the race condition that plagues dual-write architectures entirely.
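To make the guarantee concrete, here is a minimal in-memory sketch. `InMemoryOrderDb`, `OutboxRelay`, and `IdempotentConsumer` are hypothetical names standing in for Postgres, the CDC connector, and a downstream service. The sketch assumes the relay may replay rows it has already forwarded, which is why the consumer deduplicates on the event id.

```csharp
using System;
using System.Collections.Generic;
using System.Text.Json;

// One committed outbox row; Id doubles as the deduplication key.
public sealed record OutboxRow(Guid Id, string Topic, string Payload);

// Hypothetical stand-in for the database holding both tables.
public sealed class InMemoryOrderDb
{
    private readonly List<string> _orders = new();
    private readonly List<OutboxRow> _outbox = new();

    public IReadOnlyList<string> Orders => _orders;
    public IReadOnlyList<OutboxRow> Outbox => _outbox;

    // Both rows become visible together, or not at all.
    public void PlaceOrder(string orderId, bool failBeforeCommit = false)
    {
        var payload = JsonSerializer.Serialize(new { type = "OrderCreated", orderId });
        var staged = new OutboxRow(Guid.NewGuid(), "order.created", payload);

        if (failBeforeCommit)
            throw new InvalidOperationException("transaction rolled back");

        _orders.Add(orderId); // "COMMIT": the order row...
        _outbox.Add(staged);  // ...and the outbox row land together
    }
}

// Consumer that tolerates redelivery by remembering processed event ids.
public sealed class IdempotentConsumer
{
    private readonly HashSet<Guid> _seen = new();
    public int Applied { get; private set; }

    public void Handle(OutboxRow evt)
    {
        if (!_seen.Add(evt.Id)) return; // duplicate: already applied
        Applied++;
    }
}

// Stand-in for the CDC connector: forwards committed rows from a position.
public static class OutboxRelay
{
    public static void Forward(InMemoryOrderDb db, int fromPosition, IdempotentConsumer consumer)
    {
        for (var i = fromPosition; i < db.Outbox.Count; i++)
            consumer.Handle(db.Outbox[i]);
    }
}
```

A call to `PlaceOrder` with `failBeforeCommit: true` leaves both lists unchanged, so nothing is ever forwarded for that order. Calling `OutboxRelay.Forward(db, 0, consumer)` twice simulates a connector restart that replays from the beginning; the consumer's `Applied` count does not grow on the second pass.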

-- Outbox table: sits in the same DB as your business tables

CREATE TABLE outbox_events (

id UUID PRIMARY KEY DEFAULT gen_random_uuid(),

topic VARCHAR(255) NOT NULL,

payload JSONB NOT NULL,

aggregate_type VARCHAR(100) NOT NULL,

aggregate_id VARCHAR(100) NOT NULL,

created_at TIMESTAMPTZ DEFAULT now(),

sent_at TIMESTAMPTZ -- set by CDC consumer after publishing

);
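On the C# side, the table above could be mirrored by an entity along these lines. This is a sketch, not the author's class: the `For` factory is a hypothetical convenience, and mapping the PascalCase properties to the snake_case columns is assumed to be configured separately in the ORM.

```csharp
using System;
using System.Text.Json;

// Sketch of an entity matching the outbox_events columns.
public sealed class OutboxEvent
{
    public Guid Id { get; init; } = Guid.NewGuid();
    public string Topic { get; init; } = "";
    public string Payload { get; init; } = "";       // serialized JSON
    public string AggregateType { get; init; } = "";
    public string AggregateId { get; init; } = "";
    public DateTimeOffset CreatedAt { get; init; } = DateTimeOffset.UtcNow;
    public DateTimeOffset? SentAt { get; set; }      // stays null until marked as sent

    // Hypothetical factory: serializes any payload object to JSON.
    public static OutboxEvent For<T>(string topic, string aggregateType, string aggregateId, T payload) =>
        new()
        {
            Topic = topic,
            AggregateType = aggregateType,
            AggregateId = aggregateId,
            Payload = JsonSerializer.Serialize(payload)
        };
}
```

For example, `OutboxEvent.For("order.created", "Order", "42", new { OrderId = 42 })` produces a row whose `Payload` is the JSON text `{"OrderId":42}` and whose `SentAt` is null.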

// Application code — atomicity is the default, not the special case

await using var tx = await _db.Database.BeginTransactionAsync();

var order = new Order { ... };

_db.Orders.Add(order);

_db.OutboxEvents.Add(new OutboxEvent

{

Topic = "order.created",

AggregateType = "Order",

AggregateId = order.Id.ToString(),

Payload = JsonSerializer.Serialize(order.ToEvent())

});

await _db.SaveChangesAsync(); // Both writes atomic

await tx.CommitAsync(); // CDC picks up the outbox row, publishes event

The Full Architecture Flow

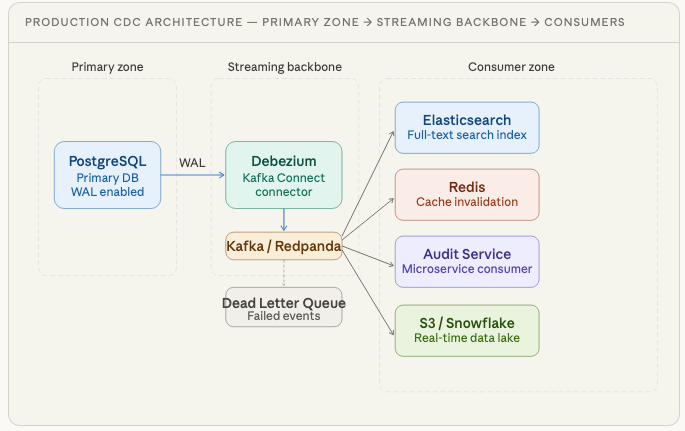

Here is the complete end-to-end topology that powers CDC at scale. Study it closely: it is the backbone of how Netflix, Uber, LinkedIn, and many other data-intensive companies move state between systems today.


You have the events flowing into Kafka. Now you have to write the code to consume them. If your consumer crashes halfway through, how do you prevent data corruption? How do you handle schema changes when someone drops a column in the database?

In the rest of this deep dive, we will cover:

C# Implementation: A production-grade Kafka Consumer in .NET utilizing manual offsets and LSN-based idempotency.

Dead Letter Queues: How to handle “Poison Pills” so a single bad event doesn’t halt your entire pipeline.

The Gotchas: Schema evolution, Replication Lag, and the limits of eventual consistency.

The CDC Tooling Landscape: Debezium vs. AWS DMS vs. Managed Connectors.